Yes, philanthropy should fund AI in government

The question isn't whether to use AI — it's whether we let it entrench the status quo or use it to finally put government in control of its own destiny

When I started Code for America[1] in 2010, one question I heard a lot was: if you want to improve the services people get from government, why specify using the internet? It’s a fair question. People engage with government in all kinds of ways, and did so even more then: in person, by mail, over the phone. So why insist on this particular technology?

My answer was that people expect to be able to use the internet to interact with their government. For a growing number of people, the internet was how they accessed almost every other service in their lives. When dealing with government meant in-person visits during working hours, and confusing, sometimes insulting paper-based forms, it not only cost people time they didn’t have, it also caused them to miss out on benefits they needed, to get in legal tangles when they failed to pay a ticket or comply with a regulation, and to generally feel that government was the enemy. These dynamics were clearly eroding trust in public institutions.

Later, I encountered Tom Loosemore’s definition of digital: “Applying the culture, processes, business models and technologies of the internet era to respond to people’s raised expectations.” I recognized in that definition the instinct behind Code for America: design government services around people’s real needs as those needs evolve, not around the convenience and habits of institutions. This was the service delivery version of Marci Harris’s “pacing problem.” Science and technology move fast, but government moves slowly, and this is now the source of a deep and fundamental problem in our society.

I share this as background to my take on an active discussion of the role of AI in government, spurred most recently by Erie Meyer’s piece in FedScoop on Friday. I love, admire, and deeply respect Erie, and I think she raises some valid concerns about philanthropic funders specifying AI as a condition of funding for government-related work. We absolutely should worry about hype cycles, misplaced priorities, and technosolutionism. When the emerging technology of the day was the blockchain, I shared with many in civic tech a contempt for that “solution in search of a problem.” But with AI, I think Erie and many others in this community are misreading key aspects of the need, the opportunity, and the role of philanthropy.

Raised expectations, again

People are already using AI to understand their medical bills, navigate insurance denials, draft appeals for benefits they were wrongly denied, and to parse lease agreements and court filings written in language no layperson was ever expected to understand. This tax season, Claude is scoring a lot of points by finding savings for people as they file their taxes. There’s been a lot of concern about how these tools might lead them astray, but folks like Dave Guarino are methodically testing how well they do at helping people navigate the complexity of programs like SNAP. Instead of warning that models could prompt you to claim a tax deduction that’s no longer legal, consumer advocates should be testing to what extent they actually do. The news from Dave on SNAP, for example, is that they’ve gotten quite good.

For years, we celebrated the transition from a forty-page PDF (or a barely disguised web version of the same) to an intuitive, well-designed web form informed by proper user research, and rightly so. But expectations are changing again, even as hundreds of thousands of forms (online and paper) still need redesigning. Soon, people may expect to have a plain-language conversation with a system that asks questions in ways they understand and does the hard work for them. They’ll expect to apply for SNAP or file their taxes by uploading a paystub and letting the AI extract the data, calculate eligibility, and ask only the questions it can’t already answer and that are actually relevant to their situation. At some point, even a very well-designed web form will start to feel the way the forty-page PDF used to feel. And that shift may happen faster than the transition to internet-enabled services did.
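To make the “ask only what it can’t already answer” idea concrete, here is a minimal Python sketch. It assumes some upstream step has already pulled facts out of an uploaded paystub into a dictionary; the question keys, wording, and relevance conditions are hypothetical and are not SNAP’s actual rules. The point is only the shape of the logic: skip anything already known, and skip anything that doesn’t apply to this household.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Question:
    key: str   # the fact this question fills in
    text: str  # plain-language wording shown to the applicant
    # Only ask when this condition holds for the facts gathered so far.
    relevant: Callable[[dict], bool] = lambda facts: True


# Hypothetical question bank; keys and conditions are illustrative, not SNAP rules.
QUESTIONS = [
    Question("gross_monthly_income", "About how much do you earn each month before taxes?"),
    Question("household_size", "How many people do you usually buy and cook food with?"),
    Question("has_children", "Do any children under 18 live with you?"),
    Question(
        "child_care_costs",
        "Do you pay for child care so that you can work?",
        # Follow-up only makes sense once we know there are children.
        relevant=lambda facts: facts.get("has_children") is True,
    ),
]


def next_questions(facts: dict) -> list[str]:
    """Keys of questions that are still unanswered and relevant to this household."""
    return [q.key for q in QUESTIONS if q.key not in facts and q.relevant(facts)]


# Facts an upstream extraction step might have pulled from a paystub (assumed).
from_paystub = {"gross_monthly_income": 2400}
print(next_questions(from_paystub))  # ['household_size', 'has_children']
```

Income never gets asked because the paystub already answered it, and the child care question only appears after the applicant says children live with them.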

Luukas Ilves and his co-authors make this point well in a paper from last year called The Agentic State. Luukas describes a future in which “public services flow directly around people’s lives.” Needs are anticipated and solutions assembled across agencies without the user ever having to understand which department does what. But the future is already here. It’s just, as William Gibson said, unevenly distributed. Ukraine has a national AI agent that lets you describe a need in plain language and then completes the workflow end-to-end, rather than routing you through forms. Imagine a US state doing this.

There’s a dark side to AI’s arrival that we have to grapple with, too. It’s not only people seeking benefits or navigating permits who will use AI. AI-enabled fraud in unemployment insurance and other benefit programs has grown substantially in recent years, and the attack surface is expanding. Luukas is correct about the stakes. Governments that fail to develop their own agentic capabilities will find that bad actors “run circles around public administration, using agentic capabilities to achieve their goals.” The choice, as he frames it, isn’t between an AI-equipped government and a simpler, more human-scaled one. It’s between a government that develops its own capacity and one that gets outrun by the people exploiting its absence.

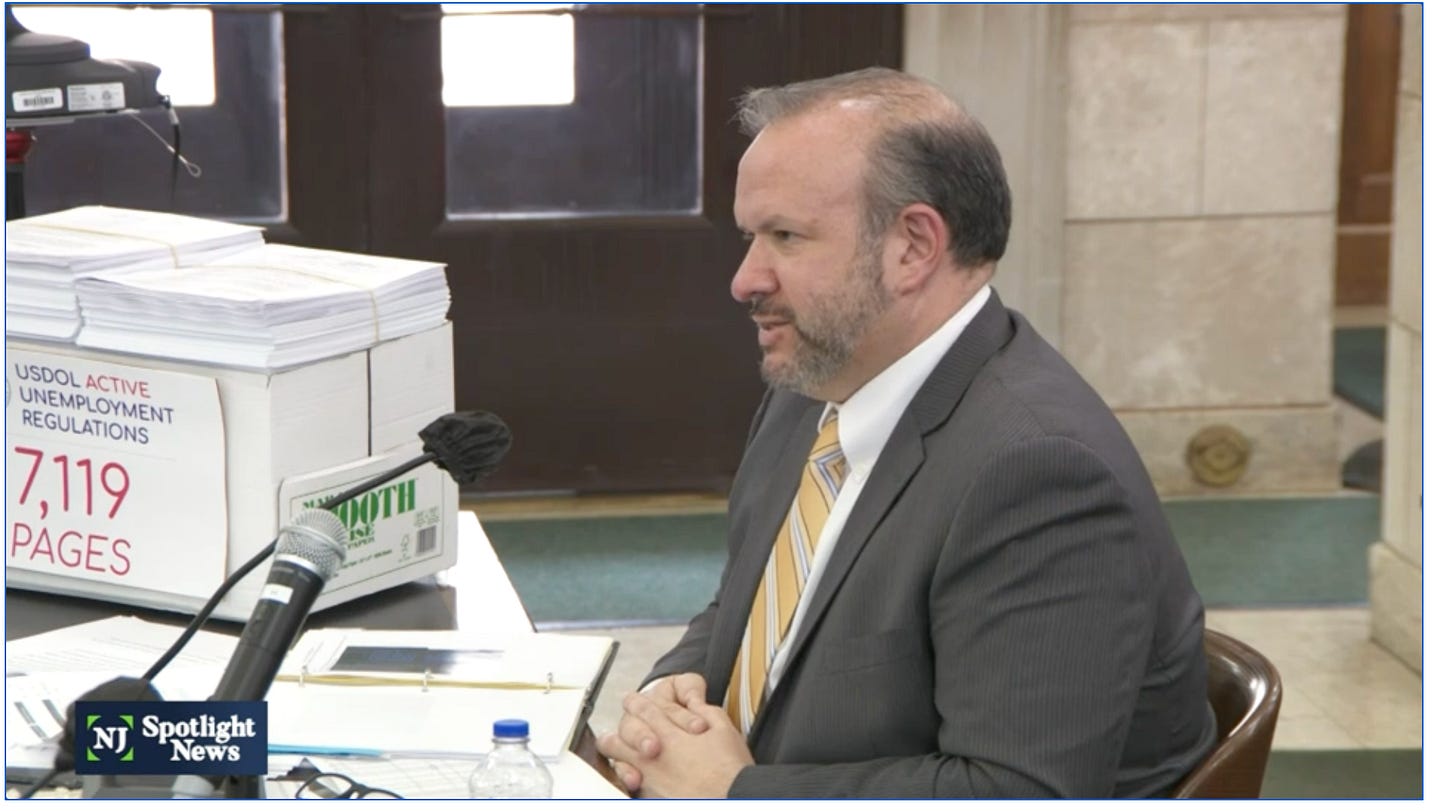

And AI can equip government for far more than benefits enrollment and fighting fraud. It is uniquely suited to the underlying problem that plagues government services: crippling policy cruft. When New Jersey’s Labor Commissioner Robert Asaro-Angelo was called to testify about his state’s unemployment insurance backlog, he brought along several boxes labeled “7,119 pages of active UI regulations” and set them in full view of the legislators. The technological issues his agency faced were a symptom. The cause was the sheer volume of rules that computer systems were supposed to operationalize. Agency guidance layered on regulations layered on statutes, each adding conditions and exceptions and cross-references, until the result is functionally impossible for an ordinary person, or even an ordinary caseworker, to navigate.
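One way to see why layered cross-references become unnavigable is to treat provisions as a graph and ask what a caseworker must read to apply a single one. The Python sketch below does that with a breadth-first search over a small, entirely hypothetical set of provisions. The identifiers are invented, and the sketch makes no claim about how any real regulatory-analysis tool works internally; it only shows how the reading burden compounds.

```python
from collections import deque

# Hypothetical provisions and the provisions each one cross-references:
# guidance points to regulations, regulations point to statutes and each other.
CROSS_REFS = {
    "guidance-12": ["reg-4.2", "reg-7.1"],
    "reg-4.2": ["stat-301", "reg-4.5"],
    "reg-4.5": ["stat-301", "stat-305"],
    "reg-7.1": ["reg-4.5", "stat-410"],
    "stat-301": [],
    "stat-305": ["stat-301"],
    "stat-410": [],
}


def must_read(provision: str) -> set[str]:
    """Every provision reachable by following cross-references (breadth-first)."""
    seen: set[str] = set()
    queue = deque(CROSS_REFS.get(provision, []))
    while queue:
        ref = queue.popleft()
        if ref in seen:  # already counted; also guards against circular references
            continue
        seen.add(ref)
        queue.extend(CROSS_REFS.get(ref, []))
    return seen


print(len(must_read("guidance-12")))  # 6
```

Even in this seven-provision toy, applying one piece of guidance means consulting six other provisions. Scale that to 7,119 pages and the caseworker’s problem is obvious.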

The field of civic tech has for too long accepted this complexity as a fixed constraint, in part, of course, because of the unhelpful norms that tell tech people to “get back in their lane” if they venture into policy. An AI-forward civic tech field would see enormous opportunity to change that. Just as the volume of rules is reaching entirely unsustainable levels, the tools to untangle and thoughtfully simplify them have arrived. Stanford RegLab’s Statutory Research Assistant (STARA) and commercial options like those from Vulcan Technologies can read an entire regulatory regime, identify redundant, conflicting, vestigial, and simply unhelpful provisions, and return a map of the tangle that no human team is likely to produce. These tools can help us weigh tradeoffs, so we move away from frameworks that are the sum total of every conceivable idea that sounded good in the abstract but in aggregate make policy unimplementable at scale. And mapping the problem is just the first step. The Agentic State paper points toward something more ambitious: encoding rules as executable logic rather than ambiguous prose, allowing policymakers (and advocates, one would hope) to simulate how a proposed regulation would actually perform before enacting it. This means stress-testing effects, surfacing unintended consequences, and identifying the edge cases that turn well-intentioned rules into precisely the kind of tangle Commissioner Asaro-Angelo hauled into that hearing room. This work still relies on human expertise and judgment, but it is incredibly hard work, and AI tools, along with norms like rules as code, could make it far easier.
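Here is a minimal Python sketch of what rules as code and simulating a proposed change might look like. Both rules, their dollar thresholds, and the four-household caseload are invented for illustration and do not reflect any real program. Encoding the current and proposed income tests as functions lets you run them over the same caseload and list exactly who gains or loses eligibility before anything is enacted.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Household:
    size: int
    monthly_income: int  # whole dollars


# Hypothetical current rule: income limit is a base amount plus $500 per extra person.
def eligible_current(h: Household) -> bool:
    return h.monthly_income <= 1500 + 500 * (h.size - 1)


# Hypothetical proposed amendment: higher base, but the per-person
# increment stops counting after four people.
def eligible_proposed(h: Household) -> bool:
    return h.monthly_income <= 1600 + 500 * (min(h.size, 4) - 1)


def simulate(caseload: list[Household]) -> tuple[list[Household], list[Household]]:
    """Households that gain or lose eligibility under the proposed rule."""
    gained = [h for h in caseload if eligible_proposed(h) and not eligible_current(h)]
    lost = [h for h in caseload if eligible_current(h) and not eligible_proposed(h)]
    return gained, lost


# Invented sample caseload for illustration only.
CASELOAD = [
    Household(size=1, monthly_income=1550),
    Household(size=3, monthly_income=2400),
    Household(size=5, monthly_income=3000),
    Household(size=6, monthly_income=3500),
]

gained, lost = simulate(CASELOAD)
print("gains eligibility:", gained)
print("loses eligibility:", lost)
```

The amendment looks generous on its face (a higher base limit), but the simulation surfaces an edge case: capping the per-person increment quietly pushes the six-person household out of eligibility. That is the kind of unintended consequence the paragraph above describes catching before enactment.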

AI can’t do anything about the political will to actually unwind and simplify these policies, regulations, and laws, but we haven’t really tested our leaders on this front. The way to find out if our law- and policymakers are willing to do the hard work of tidying up these messes is to hand them options, and see if they’re willing to make much-needed change. The pull of the status quo will be strong, so we should be relentless in teeing up opportunities for leaders to truly lead by using AI to rightsize these statutory and regulatory frameworks. If we use AI merely to navigate them, it will serve to excuse and enable further bloat. When benefits systems fail during times of need, it’s not the fault of the caseworkers, and it’s not even really the fault of the COBOL code everyone loves to blame. It’s the 7,119 pages. AI gives us a new set of tools to actually address the problem, if we choose to use them.

(This is just one example of where I hope civic tech advocates move as our world evolves: upstream. We must change the conditions under which technology in government is built and bought, not just the technology itself. Policy complexity is one of those crippling conditions, but there are others.)

AI has also given us a new set of tools to improve access to government services, especially for non-English speakers. US Digital Response, for example, has had enormous success helping governments use AI for translation. In New Jersey, so many more claims came in from ESL applicants after the translation work that the Commissioner (same one as pictured above!) was reportedly upset, realizing how many people must not have been applying before. This wouldn’t have happened without AI, but it also would have been unlikely to happen, at least when it did, without philanthropy investing in USDR to help the state and many other governments do the same. (See my point about philanthropy as catalyst at the end.)

The vendor argument runs both ways

Erie understandably worries that encouraging government to use AI tools sold by tech companies will deepen dependency and entrench the current dynamic. If “use AI” always means “buy an AI product from a large vendor and integrate it into your existing systems,” we could reproduce our current problems: more lock-in, more opacity, more contracts that agencies can’t escape. But that “if” hides a distinction her piece doesn’t quite make, and it’s an important one.

Consider what tools like Claude Code actually make possible. A manager in a state health and human services department needs to fix a bug in a benefits application, perhaps something like the VA submit button Erie describes in her piece, where veterans couldn’t tell whether their form had gone through. Or she needs to build a new module to comply with a regulation that just changed. Today, she can’t do either of those things without getting budget approval, filing a change request, waiting months (sometimes years!), and paying the vendor an enormous sum. The system is taxpayer-funded and should be owned and operated by the government. In practice, the vendor usually guards access to it. But what if that manager could open an agentic coding tool and make the fix herself?

That may sound dangerous to anyone who’s seen Claude produce code with mistakes or vulnerabilities, but these tools are getting better quickly. At the very least, a small technical staff can now do far more than it once could, putting self-sufficiency within closer reach. The old excuse that “no one understands the code base except the vendor” should no longer hold. Even if you don’t trust Claude to actually push the changes live, it can dramatically collapse the distance between where we are now, with government at the mercy of vendors, and where we could be, with government in control of its own destiny.

Technology is not the barrier to this future—it’s possible today. What makes it unlikely is the norms around who is allowed to touch a system, the way roles have been defined, the procurement rules that created the vendor relationship in the first place, and the contracts that give vendors ongoing control over systems built with public money. These are all aspects of a very broken status quo, one whose time should be up. In the best case, when a philanthropic funder issues a call for proposals encouraging the use of AI, they are creating incentives to overcome those barriers.

Think about how this could play out in the state Medicaid context. States are now implementing work requirements and will each spend tens of millions of dollars on IT systems to do so, with the money flowing to the same small set of vendors, on the same terms, with the same locked-in dependencies. Agentic coding tools could plausibly reduce those costs to near zero for agencies that develop the internal capacity to use them. Remember that these tools are getting better all the time. I find what they can do today remarkable; it’s hard to imagine how powerful they’re going to be if they get even better. If they can be part of a fundamental rebalancing of power between government and the vendor ecosystem, we should use them for that purpose.

AI tools sold by vendors could certainly deepen dependency. The answer is not to avoid AI, though. It’s to be specific about the kinds of AI adoption we’re trying to cultivate and the terms that government should set with its vendors. The kind that builds government’s own capacity is categorically different from the kind that adds another vendor to the stack and doubles down on lock-in.

Philanthropy’s role as catalyst

Lastly, I want to make sure we’re not conflating philanthropic strategy with government policy here. If agencies are requiring AI where it’s not needed, that’s bad. But philanthropists can’t (and aren’t trying to) dictate what technologies government adopts. The funds they’re dangling are minuscule compared to government IT budgets, but because in government it’s easier to get funding for a multi-hundred-million-dollar project than for a small test of something unproven, philanthropy is presumably trying to fill a gap.[2]

When I was starting Code for America, and I got all those questions about why we worked on online services, the other part of my answer was “because that’s what governments are struggling with.” We weren’t philanthropists, but we were raising philanthropic funds to do work in government. To Erie’s point, we would never have said “we only build Ruby on Rails apps” or “we only code in Java,” but we did bring resources to a specific kind of work that was tied to a novel technology because that technology was important and government was bad at leveraging it.

Just because some philanthropists want to encourage experimentation with AI doesn’t mean that even they think that all problems are solved with AI. I certainly don’t. They’re trying to play a unique, catalytic role, shaping the public sector to act more effectively in the public interest. One can believe that the work of debugging a submit button is enormously valuable and high leverage but not believe it’s the kind of thing that philanthropy should fund, for a whole host of reasons. My views on the value of the kind of work Erie describes haven’t changed, but questions of who should do and pay for what and how philanthropy responsibly shapes the public sector require more nuance than just a recognition of what’s valuable. And they require constant updating as progress happens, bottlenecks shift, and circumstances change. Just like the public’s expectations, philanthropic strategy evolves.

Erie’s piece ends with a question about who benefits from AI adoption in government. It’s the right question. The answer depends on what kinds of tools we adopt and how we buy and use them. Tools that paper over and excuse accumulated policy cruft may hurt; those that help simplify it may help. Vendors selling snake oil will hurt; models that empower government teams to own their own destinies should help. AI that digitizes the same broken experience in a new interface will hurt; AI that truly helps meet people’s raised expectations will help. To me, these are not reasons to sit on the sidelines. They are all reasons why philanthropy should try to shape this transition in the public interest.

[1] Code for America is not responsible for anything I say here. I left as executive director in early 2020 and stepped down from the board in early 2023, so I certainly don’t speak for them.

[2] I don’t have hard numbers on philanthropy’s investment in government AI, but the federal government spends over $100B a year on tech, and lord knows how much more states and municipalities spend. These grants may be meant as incentives, but at generally $1M or less, they’re not very big incentives.
